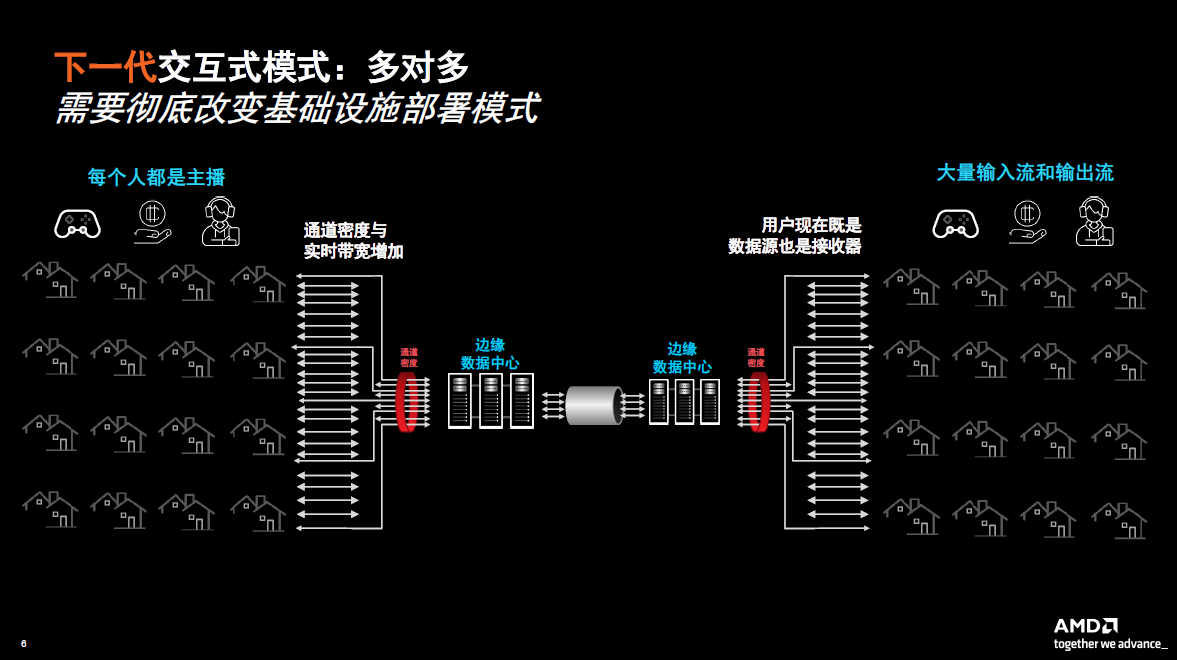

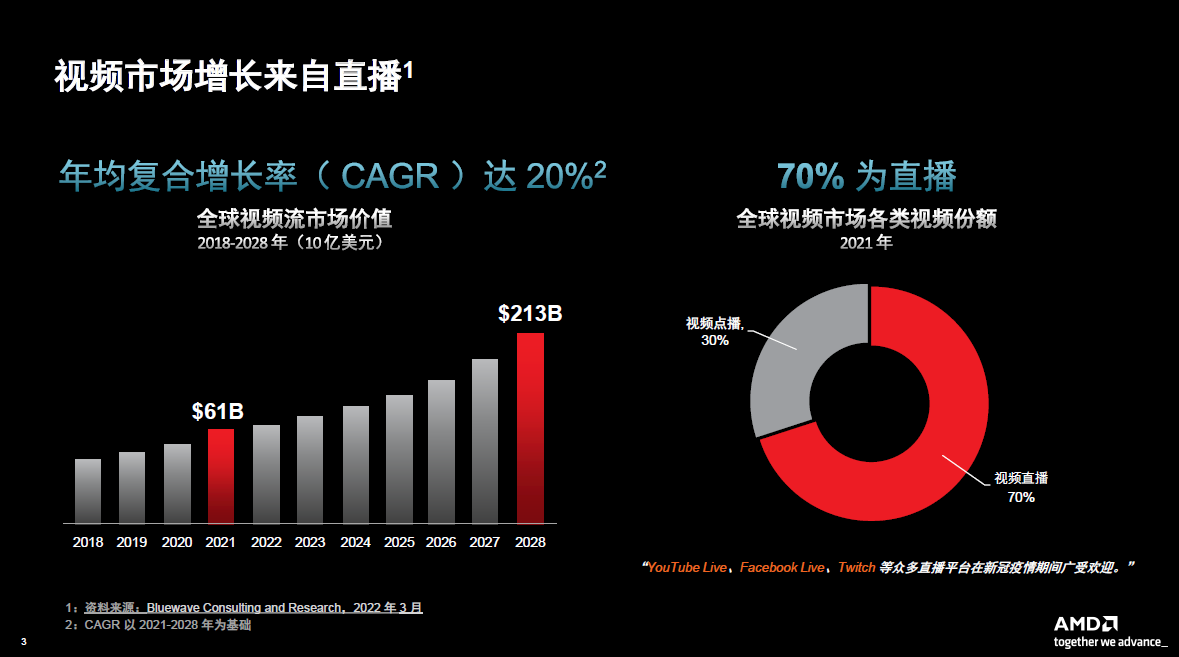

The live streaming market is growing rapidly in terms of both revenue and infrastructure deployment. According to 2021 data, live content accounts for more than 70% of the global video market.

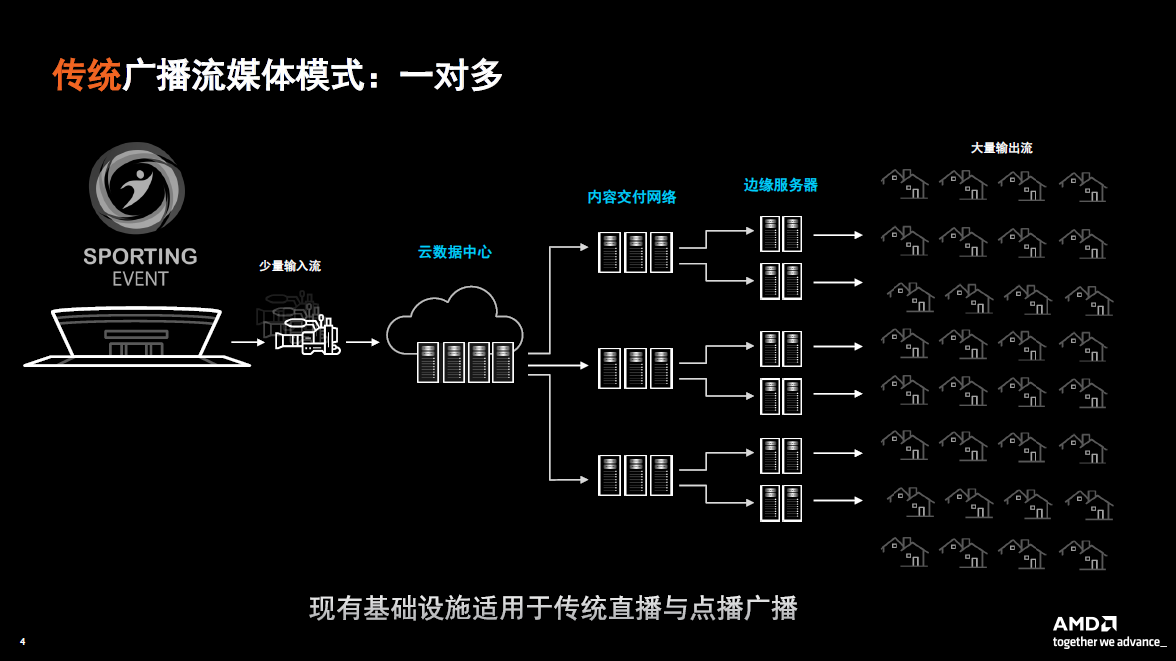

Today, traditional live streaming is mainly handled in software running on CPUs. Traditional live broadcasts follow a one-to-many model: because the number of video streams is relatively small and latency is comparatively easy to control, conventional network architectures are sufficient to support these services.

Compared with traditional live broadcasting, next-generation live streaming is mainly a many-to-many model: everyone can be a host, acting as both a data source and a receiver. Such scenarios include online viewing, live shopping, online auctions, and social streaming. These applications require data processing to move closer to users, out to the edge of the network. Handling them at the edge means giving up the economies of scale that centralized cloud deployments provide, so the infrastructure deployment model has to change fundamentally.

As latency requirements for live streaming keep tightening and the cost of edge deployment keeps rising, the industry has been pushed to develop a new generation of interactive live streaming solutions. These real-time, interactive streaming applications demand both low latency and high capacity, and only a new architecture can absorb the resulting cost pressure.

AMD's video transcoding division recently launched the Alveo MA35D, a new media accelerator card that spans the full stack from chip to board to software. According to Sean Gardner, director of video strategy and market development at AMD, the Alveo MA35D is optimized for a range of emerging application scenarios. In the product name, MA stands for Media Accelerator, 35 marks it as the next-generation successor to the Alveo U30, and D indicates its two (dual) video processing units.

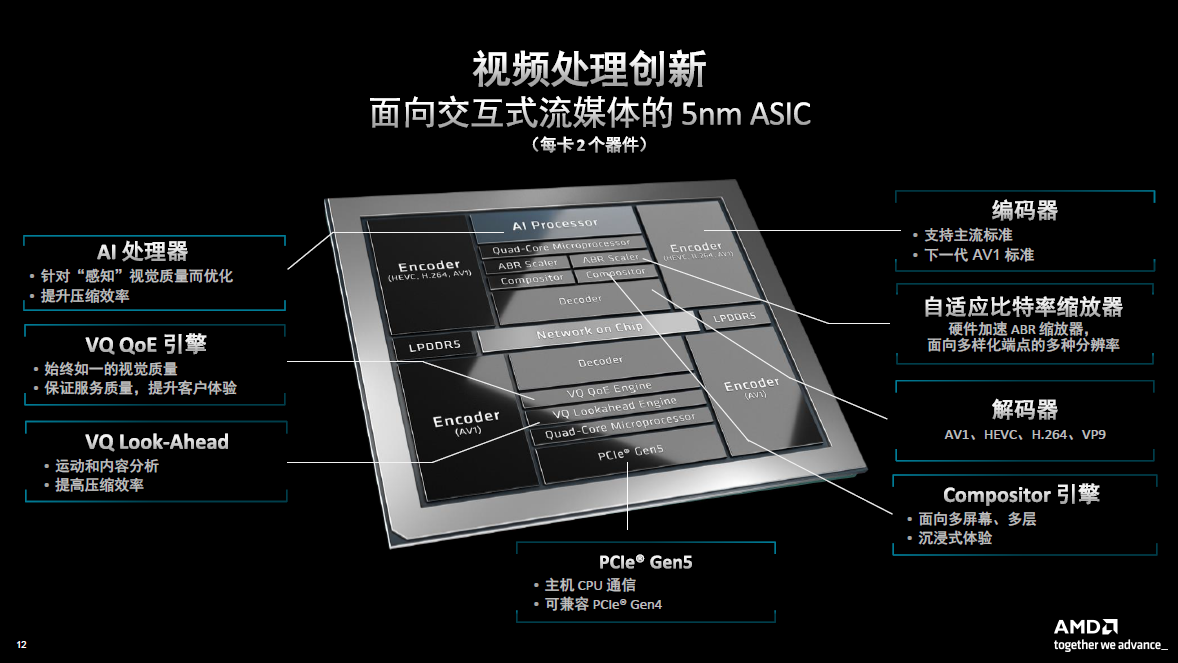

The Alveo MA35D media accelerator card features two 5nm ASIC-based video processing units (VPUs) that support the AV1 compression standard and is designed to promote a new era of large-scale live interactive streaming services.

The Alveo MA35D can significantly improve the economics of streaming, making these new application scenarios commercially viable. It combines high-density, ultra-low-latency video processing units with AI capabilities. Each card delivers up to 32 channels of 1080p60 transcoding at 1W per stream. Its 4K encoding latency is as low as 8ms, half the conventional 16ms. With 22 TOPS of INT8 AI compute, it can support many new application scenarios and meet customers' expectations. "At the same time, we must also ensure the cost-effectiveness of the Alveo MA35D, so its suggested retail price is also very attractive," Gardner added.

Compared with the previous-generation Alveo U30, the Alveo MA35D offers 4x the channel density, 3x lower power consumption, and 4x lower latency. Beyond these gains, it also adds a number of new features and capabilities.

"In developing the Alveo MA35D, we worked very closely with customers at every stage, from concept and algorithms through design, card, and complete solution. Through this collaboration, we aimed to ensure that the product's benefits can be realized in most application scenarios," Gardner said.

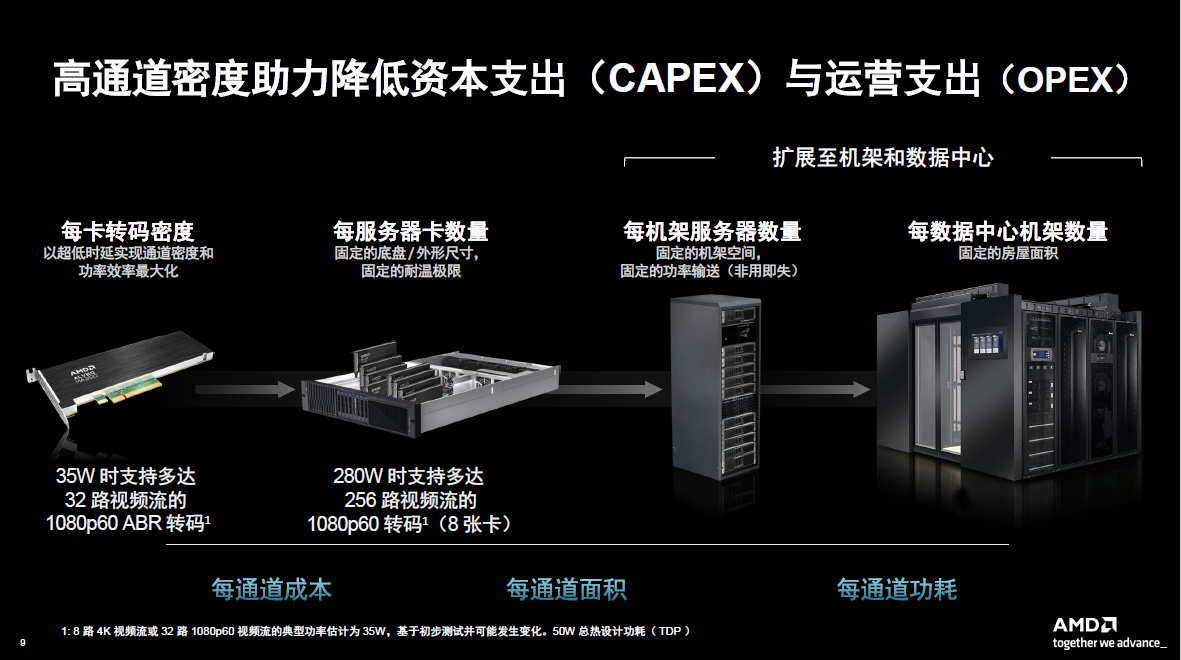

These advantages also have to be realized by streaming providers as they deploy next-generation applications. "During card-level design and optimization, we anticipated how the Alveo MA35D would affect the way customers evaluate our solutions. Customers deploy into fixed infrastructure, with constraints such as floor space and power budget, so we optimized the card to deliver all 32 channels within those limits," Gardner said.

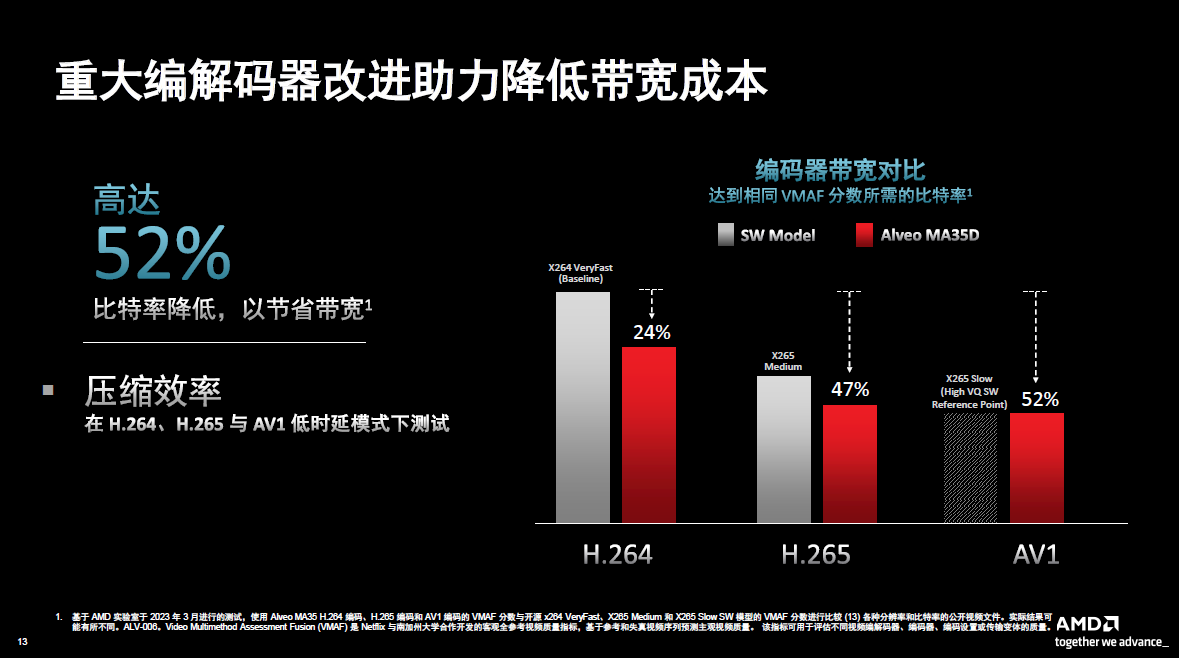

“A 1U rack server with 8 cards provides 256 channels, maximizing transcoding density per server, per rack, and per data center. The cost per channel is $50 and the power consumption per channel is 1W. Because we use a very advanced codec, we can save up to 52% of bandwidth per channel. When customers evaluate efficiency, they mainly look at cost per square meter and power consumption per channel. In terms of price and performance, our solution is very cost-effective.”
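The density and cost figures above compose through simple multiplication. The sketch below works them out for one fully populated 1U server using only the numbers quoted in this article. The 6 Mbps per-channel baseline bitrate is a hypothetical placeholder, the power figure covers the accelerator cards only (not the whole server), and the 52% saving is the best-case "up to" figure, so real egress savings will vary with content.

```python
"""Back-of-envelope sizing for a 1U server with eight Alveo MA35D cards.

All constants are the figures quoted in the article, except the baseline
bitrate, which is a hypothetical input chosen by the caller.
"""

CHANNELS_PER_CARD = 32        # 1080p60 transcode channels per card
CARDS_PER_1U_SERVER = 8
WATTS_PER_CHANNEL = 1.0       # quoted 1 W per stream (cards only, not the whole server)
COST_PER_CHANNEL_USD = 50     # quoted cost per channel
MAX_BANDWIDTH_SAVING = 0.52   # "up to" 52%: a best case, not a guarantee


def server_profile(baseline_mbps_per_channel: float) -> dict:
    """Return channel count, accelerator power, accelerator cost and egress."""
    channels = CHANNELS_PER_CARD * CARDS_PER_1U_SERVER
    baseline_egress = channels * baseline_mbps_per_channel
    return {
        "channels": channels,
        "accelerator_power_w": channels * WATTS_PER_CHANNEL,
        "accelerator_cost_usd": channels * COST_PER_CHANNEL_USD,
        "baseline_egress_mbps": baseline_egress,
        "best_case_egress_mbps": baseline_egress * (1 - MAX_BANDWIDTH_SAVING),
    }


if __name__ == "__main__":
    # Hypothetical 6 Mbps per 1080p60 channel before the AV1 savings.
    for key, value in server_profile(6.0).items():
        print(f"{key}: {value:,.1f}")
```

For the assumed 6 Mbps baseline this yields 256 channels, 256 W of accelerator power, and $12,800 in per-channel cost (about $1,600 per card), with 1,536 Mbps of egress reduced to roughly 737 Mbps in the best case.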

How the Alveo MA35D achieves this performance

The first factor is the Alveo MA35D's new dedicated video processing unit (VPU). Four separate encoder (MP) modules supporting the AV1 compression standard sit in the four corners of the chip. This gives customers maximum flexibility when deploying applications: they can keep using older compression standards while adding new ones. When developing and optimizing the encoder algorithms, AMD also had to make sure acceleration covers the entire video processing pipeline, not just encoding. Many current and future application scenarios involve decoding, scaling, and compositing, and the Alveo MA35D accelerates all of these in hardware to give each channel the highest density, lowest power consumption, and lowest cost. On top of this, the chip adds AI and machine learning modules, which help improve video quality while reducing bitrate during processing.

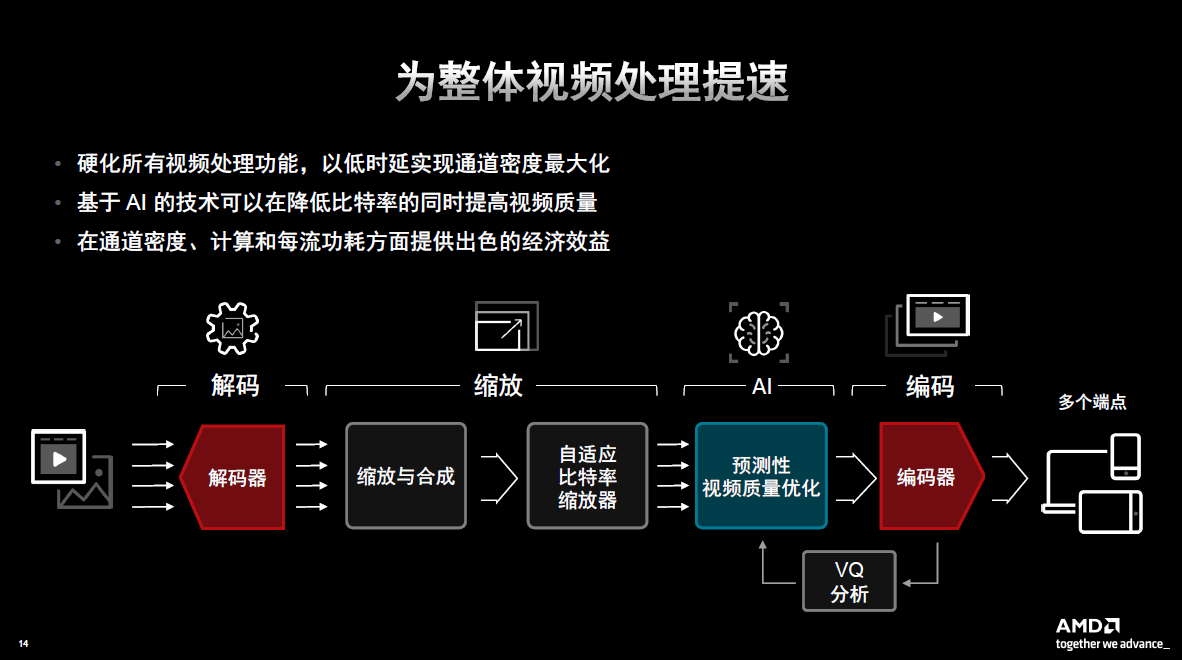

Gardner pointed out that customers want the highest processing efficiency at the lowest cost and power consumption per channel, which makes the Alveo MA35D very attractive, especially compared with other processing solutions on the market. The figure below shows its advanced video processing pipeline, which is designed to support a variety of future application scenarios.

All of this processing is done on the chip and card, maintaining maximum density so that customers get the highest efficiency and the best economics.

According to Gardner, traditional video processing solutions are set up and deployed for the worst case: if the system can handle the assumed worst-case scenario, easier scenarios will not be a problem. The drawback of designing and deploying for worst-case conditions is that it is very inefficient and can be very costly. AMD therefore introduced AI-based video content analysis into the Alveo MA35D. Using the card's AI and machine learning capabilities, the system can better understand the characteristics of a video, such as its complexity and type, for example whether it is synthetic computer game footage or natural content.

Using these AI and machine learning insights, the encoder can be configured more efficiently for dynamic content, reducing bandwidth and storage requirements while improving the efficiency of dynamic video processing.

Take the evening news as an example. A typical evening news program shows a host in a close-up, head-and-shoulders shot. The broadcast may then cut to a sports event such as a car race, which contains a lot of motion, before switching back to the host. While the host is on screen, the AI can configure the encoder to reduce bandwidth; when the feed switches to the sports event, the settings are adjusted dynamically in real time. The result is an intelligent, dynamic, optimized video processing pipeline that scales at very low power and cost.
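The news-versus-car-race example amounts to content-adaptive rate control: measure how demanding the current content is, then pick an encoder target to match. The sketch below is a hypothetical stand-in, not AMD's method. The MA35D uses AI/ML content analysis, whereas here a simple mean absolute difference between consecutive frames serves as the complexity score, and the bitrate range (1,500 to 6,000 kbps) and saturation point are illustrative assumptions.

```python
"""Toy content-adaptive bitrate selection (illustrative only).

Frames are flat lists of 8-bit luma values. A real system would use a
learned content classifier instead of this simple motion metric.
"""


def mean_abs_diff(prev: list[int], cur: list[int]) -> float:
    """Average per-pixel change between two equal-size frames (a motion proxy)."""
    if len(prev) != len(cur) or not cur:
        raise ValueError("frames must be non-empty and the same size")
    return sum(abs(a - b) for a, b in zip(prev, cur)) / len(cur)


def pick_bitrate_kbps(complexity: float, low: int = 1500, high: int = 6000,
                      saturate: float = 40.0) -> int:
    """Map a complexity score linearly onto [low, high] kbps, clamped at `saturate`."""
    frac = max(0.0, min(complexity, saturate)) / saturate
    return round(low + frac * (high - low))


if __name__ == "__main__":
    anchor_prev, anchor_cur = [100] * 16, [102] * 16   # near-static studio shot
    race_prev, race_cur = [0] * 16, [80] * 16          # large frame-to-frame change
    print("news anchor:", pick_bitrate_kbps(mean_abs_diff(anchor_prev, anchor_cur)), "kbps")
    print("car race:", pick_bitrate_kbps(mean_abs_diff(race_prev, race_cur)), "kbps")
```

With these toy frames the studio shot gets 1725 kbps and the race gets the 6000 kbps ceiling. A linear map is the simplest choice; a production encoder would more likely switch between tuned presets per content class.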

However, AI is not perfect, and its dynamic adjustments may occasionally be inaccurate or misjudged. One of AMD's innovations is therefore a VQ (video quality) analysis IP module. It forms a feedback loop around the AI's dynamic adjustments to catch wrong decisions. VQ analysis checks the quality of every frame, so if an error occurs it can be corrected promptly. "Although similar solutions have been implemented in traditional models, we are pleased to see this approach work in such real-time, very low-latency application scenarios," Gardner added.
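The VQ feedback loop can be pictured as a closed-loop correction wrapped around the AI's initial encoder setting. The sketch below is a hypothetical illustration, not the behavior of AMD's VQ IP: `toy_quality` is an invented stand-in for a real per-frame quality metric, and the target score, margin, QP bounds, and one-step adjustments are assumptions. Lower QP means more bits and higher quality.

```python
"""Toy closed-loop quality correction around an encoder's QP choice.

`toy_quality` is a made-up stand-in for a real per-frame VQ metric.
"""


def toy_quality(qp: int, complexity: float) -> float:
    """Hypothetical quality score: drops faster with QP on more complex content."""
    return 100.0 - qp * complexity


def adjust_qp(qp: int, measured: float, target: float = 90.0, margin: float = 2.0,
              qp_min: int = 10, qp_max: int = 51) -> int:
    """One feedback step: spend more bits (lower QP) when quality falls short,
    reclaim bits (raise QP) when quality clearly exceeds the target."""
    if measured < target:
        qp -= 1
    elif measured > target + margin:
        qp += 1
    return max(qp_min, min(qp_max, qp))


def run_loop(initial_qp: int, complexity: float, frames: int = 20) -> list[tuple[int, float]]:
    """Encode `frames` frames, correcting QP after each quality measurement."""
    qp, history = initial_qp, []
    for _ in range(frames):
        quality = toy_quality(qp, complexity)
        history.append((qp, quality))
        qp = adjust_qp(qp, quality)
    return history


if __name__ == "__main__":
    # The AI's initial QP (30) undershoots the quality target on this content.
    for frame, (qp, quality) in enumerate(run_loop(30, 0.5)):
        print(f"frame {frame:2d}: QP {qp}, quality {quality:.1f}")
```

Starting from a QP of 30 that undershoots the target, the loop lowers QP one step per frame and settles at 20 (score 90.0) by frame 10; starting from an overly conservative QP of 10, it reclaims bits and settles at 16. The dead band set by `margin` keeps QP from oscillating once quality is within range.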

Bandwidth is a very large operating expense for streaming customers, and AMD is also working to improve its codecs. In the histogram in the figure below, the bar on the left is the baseline, and anything lower than it indicates bandwidth savings. The comparison assumes the video reaches a typical, equivalent level of visual quality. On that basis, the AV1 encoder achieves the same visual quality with bandwidth savings of up to 52%.

Having seen how AI and machine learning support video quality analysis, let's look at another advantage. The two images below were compressed at the same bitrate. The image encoded without AI has more artifacts and is less sharp, while the image on the right is noticeably better at the same bandwidth.

AI can detect human faces so that the encoder allocates more bits to these key regions and fewer bits to the rest of the frame. In this picture, the face is the key region. Because AI identifies which regions matter, resources can be allocated differently during processing, letting customers and streaming service providers compress more aggressively and lower bitrates while maintaining quality. Key regions are not limited to faces; text detection can be equally important. Gardner noted that keeping small text in a video legible is very important.
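Region-of-interest (ROI) encoding of the kind described here is commonly expressed as a per-block QP map: blocks that overlap a detected face or text box get a negative QP offset (more bits), and the rest get a small positive one (fewer bits). The sketch below assumes a detector has already produced pixel-space boxes; the 16-pixel block size, base QP of 32, and offsets of -6 and +2 are illustrative values, not MA35D settings.

```python
"""Build a per-block QP map from detected regions of interest (illustrative)."""

Box = tuple[int, int, int, int]  # (x, y, width, height) in pixels


def overlaps(bx: int, by: int, block: int, box: Box) -> bool:
    """True if the square block whose top-left corner is (bx, by) intersects the box."""
    x, y, w, h = box
    return bx < x + w and x < bx + block and by < y + h and y < by + block


def roi_qp_map(cols: int, rows: int, rois: list[Box], block: int = 16,
               base_qp: int = 32, roi_delta: int = -6,
               bg_delta: int = 2) -> list[list[int]]:
    """Return a rows x cols grid of QPs: lower inside ROIs, slightly higher elsewhere."""
    qp_map = []
    for r in range(rows):
        row = []
        for c in range(cols):
            inside = any(overlaps(c * block, r * block, block, box) for box in rois)
            row.append(base_qp + (roi_delta if inside else bg_delta))
        qp_map.append(row)
    return qp_map


if __name__ == "__main__":
    face = (16, 16, 16, 16)      # hypothetical face box from a detector
    caption = (0, 40, 64, 8)     # hypothetical small-text box
    for row in roi_qp_map(cols=4, rows=3, rois=[face, caption]):
        print(row)
```

On this 4x3 block grid, the face box lowers QP to 26 for a single block in the middle row, and the caption box lowers the entire bottom row to 26, while every other block is raised to 34 to pay for them.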

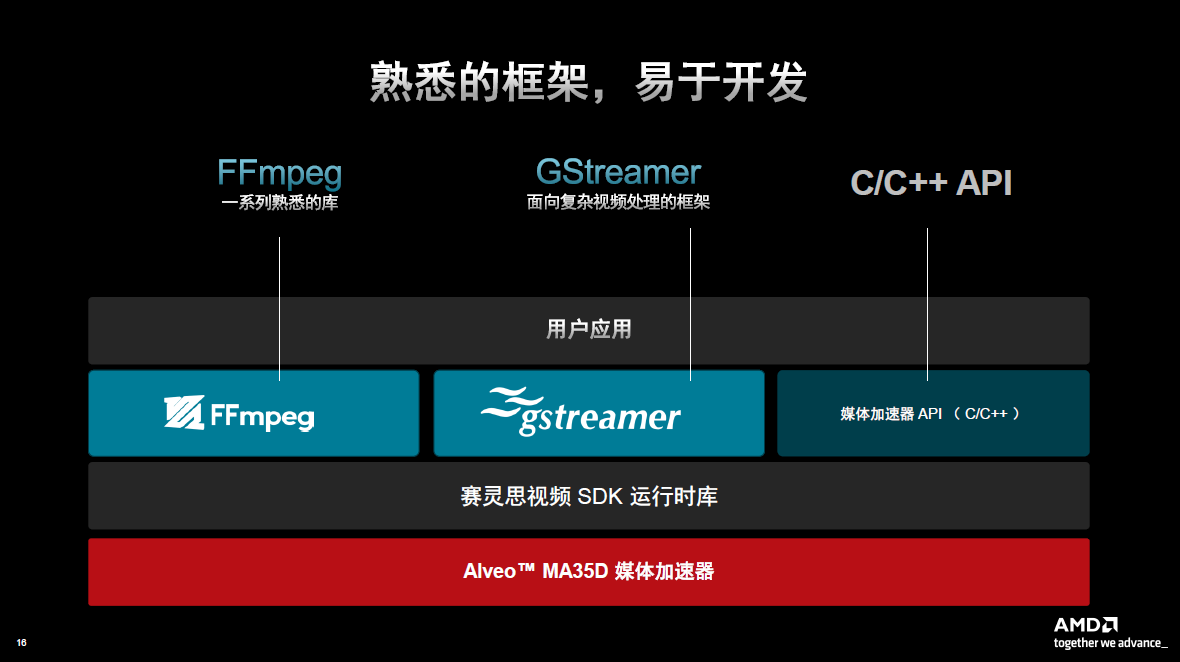

In video processing, cost-effectiveness matters, but what matters most is that the technology is easy to adopt. The platform is accessible through the AMD Media Acceleration Software Development Kit (SDK), which supports the widely used FFmpeg and GStreamer video frameworks for ease of development. Customers with their own frameworks can integrate through AMD's media accelerator API.

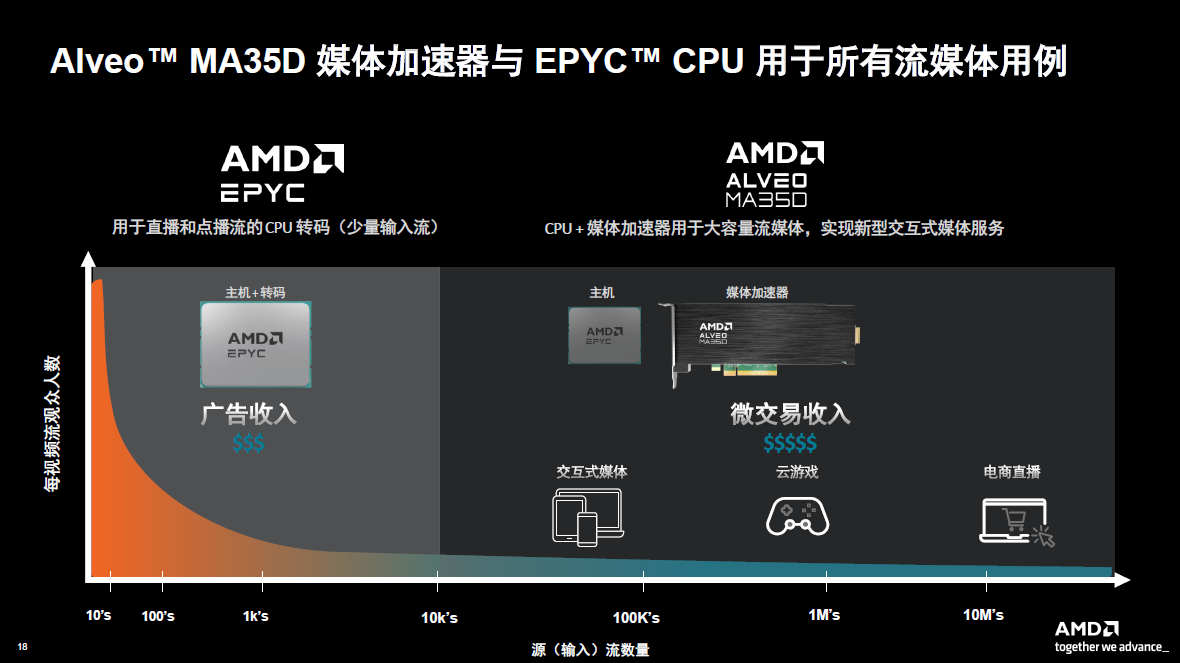

The Alveo MA35D does not compete with AMD's CPUs and GPUs; it complements them. Each product has its own strengths and is very efficient at its job. CPUs can provide very high-performance compression, but when handling millions of video streams the economics do not work out. For applications that require image rendering, the GPU is the best tool. Some applications need all three working together to deliver a cost-effective, high-performance solution. In cloud e-sports or cloud gaming, for example, the GPU renders the game content, the Alveo MA35D handles all the low-latency, high-quality encoding, and the EPYC CPU runs the application-level system processing. This combination gives customers the highest density at a very favorable price and low power consumption.

The figure below further shows which applications each processor suits: EPYC CPUs excel at certain types of applications, while the Alveo MA35D is well suited to others. In general, software running on CPUs suits a relatively small number of video streams, whereas the Alveo MA35D suits interactive scenarios with millions of streams.

Samples of the Alveo MA35D media accelerator card are available now, with volume shipments expected in the third quarter. In summary, AMD developed the Alveo MA35D from the ground up and optimized it for high-capacity, interactive streaming, with the goal of delivering high cost-effectiveness in both capital and operational expenditures.